The Organizational Cost of AI-Driven Productivity: A Consulting Perspective on the Pressures of Higher Output

Key Takeaways

- AI productivity in consulting is increasing output, but higher expectations are expanding workload rather than reducing it

- The focus has shifted from using AI to proving measurable impact and improving decision quality in client delivery

- AI-generated outputs require structured validation, making quality assurance a core capability rather than a final step

- The consulting pyramid is evolving into a more vertical, AI-enabled model, requiring workflow redesign rather than just tool adoption

AI is delivering exactly what consulting firms have been chasing for decades: speed, scale, and efficiency. Research that once took months is completed in weeks. Detailed syntheses are drafted in seconds. Entire analyses are generated on demand. By every conventional metric, productivity is up.

Inside consulting teams, the experience of work is moving in the opposite direction. Deadlines feel tighter. Expectations are higher. Work is more fragmented, more intense, and paradoxically, more exhausting.

Adoption data reinforces the trajectory. McKinsey’s State of AI in 2025 shows that 91% of organizations in professional services, covering management consulting, market research, product research, and legal services, are using AI in at least one business function. AI is no longer confined to experimentation; it is embedded across core workflows, from knowledge management to product and service development, marketing, and operations. For consulting firms, the signal is unambiguous: AI is no longer a pilot but a permanent delivery layer. The commercial, talent, and delivery pressures are now compounding.

What this has produced is a structural shift in how consulting work operates. As AI removes friction from execution, it reshapes workload, often in ways organizations are not yet designed to absorb. The result is a growing disconnect between measured productivity and experienced pressure. This article examines why that gap exists, what it costs, and how consulting leaders can capture AI productivity without burning out their teams.

AI Is Raising the Bar for Impact, Not Just Efficiency

As AI adoption accelerates, consulting teams are under growing pressure to translate its potential into measurable impact. Clients expect clear outcomes, faster results, and tangible returns on investment. The burden of proof has shifted from whether AI is being used to whether it is shortening delivery cycles, improving decision quality, and delivering measurable business outcomes.

This shift is redefining how consulting work is evaluated. Efficiency alone is no longer sufficient. Teams are expected to demonstrate that AI-assisted work is materially better: faster, cheaper, and still insightful. In practice, this raises the standard for what constitutes quality, increases scrutiny on deliverables, and reinforces accountability for outcomes. The ability to use AI effectively is quickly becoming a baseline expectation rather than a differentiator.

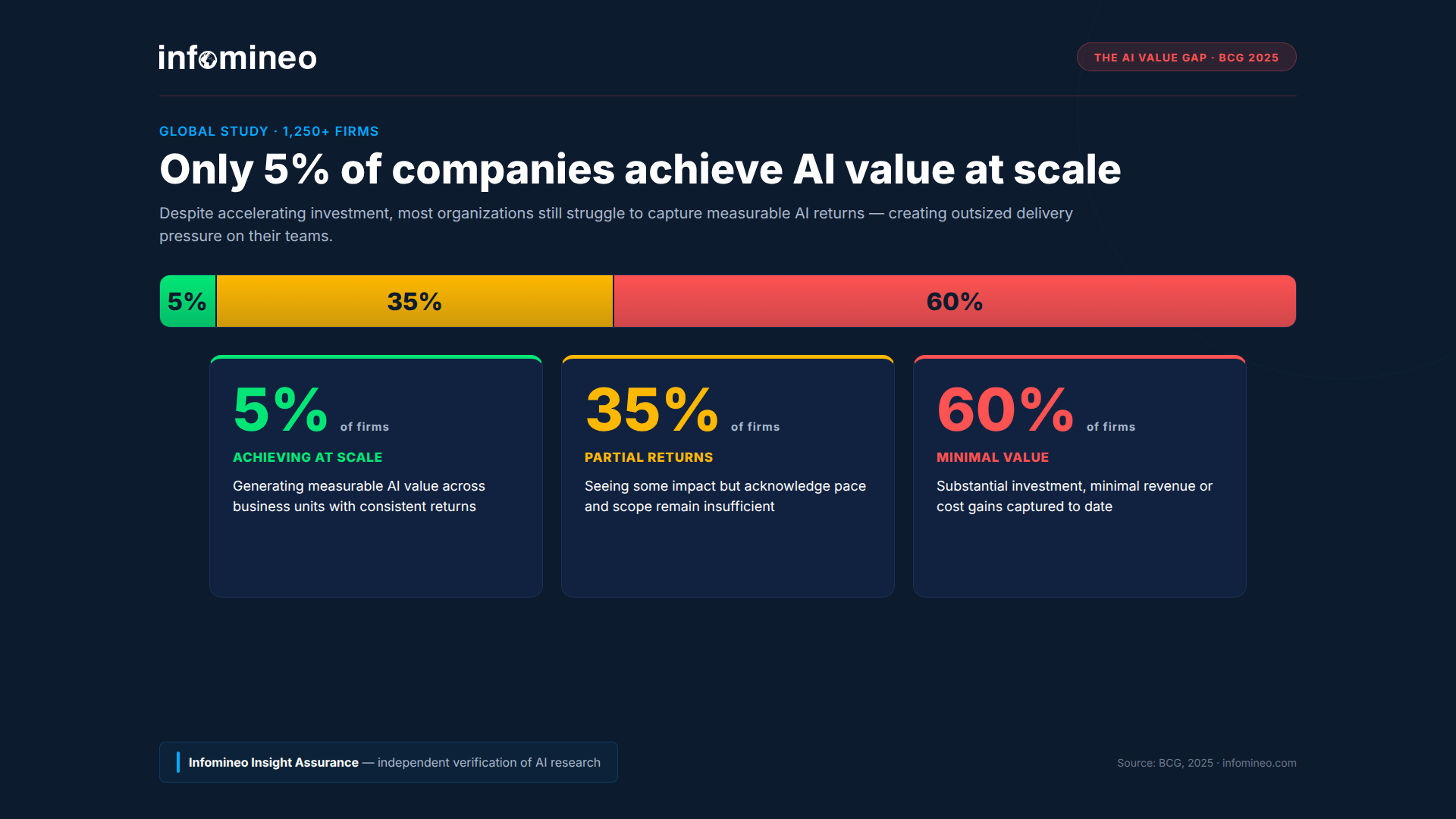

The market reality is harsher than the adoption narrative suggests. According to BCG’s 2025 cross-industry study on the AI value gap, only 5% of companies are achieving AI value at scale. A full 60% are generating little to no material value, reporting minimal revenue or cost gains despite substantial investment. The remaining 35% are seeing some returns but acknowledge they are not moving far enough or fast enough.

For consulting firms, this gap adds internal pressure to client pressure: leaders expect AI investment to translate into firm-wide ROI, not just project-level efficiency. When results are difficult to measure or slower to materialize, teams face increasing scrutiny to justify the value of AI-driven work. In this context, the reliability of underlying insights becomes critical to both delivery quality and the ability to demonstrate impact.

Productivity Gains Are Translating into More Work

As AI enables faster turnaround, consulting teams are not simply delivering the same work more efficiently. The volume of output itself is increasing, with more analyses, iterations, and deliverables expected within the same time frame.

In parallel, AI expands the range of outputs that can be generated, from spreadsheets and slides to structured analyses. These outputs require review, validation, and refinement before they can be used in client work. In practice, this validation phase can take more time than initially anticipated, particularly when outputs need to be cross-checked, contextualized, or reconciled with client-specific constraints.

As output scales, more of the effort moves toward validation, synthesis, and interpretation, tasks that rely on judgment, context, and accountability. As foundational analytical work is reduced, teams rely less on large junior cohorts and more on smaller, senior-heavy structures, concentrating responsibility at higher levels of the organization.

The result is a structural shift: productivity gains are not absorbed as time saved, but redistributed. Time saved through automation is partially reallocated to reviewing AI-generated outputs for accuracy, and partially redirected toward higher-value activities such as synthesis, client communication, and decision support.
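The redistribution described above can be made concrete with simple arithmetic. The sketch below is purely illustrative: the function name `redistribute_saved_time`, the hours, and the review and reinvestment shares are hypothetical planning inputs, not figures drawn from any study cited in this article.

```python
def redistribute_saved_time(baseline_hours, ai_hours, review_share, reinvest_share):
    """Split the hours AI saves on a task into review, reinvestment, and slack.

    All inputs are hypothetical planning assumptions:
    - review_share: fraction of saved hours spent validating AI output
    - reinvest_share: fraction redirected to synthesis, client work, etc.
    """
    if ai_hours > baseline_hours:
        raise ValueError("ai_hours must not exceed baseline_hours")
    if review_share < 0 or reinvest_share < 0 or review_share + reinvest_share > 1:
        raise ValueError("shares must be non-negative and sum to at most 1")

    saved = baseline_hours - ai_hours
    review = saved * review_share
    reinvest = saved * reinvest_share
    slack = saved - review - reinvest  # whatever is left is genuine time saved
    return {"saved": saved, "review": review, "reinvest": reinvest, "slack": slack}


# Illustrative case: a 40-hour task now takes 20 hours with AI, but every
# saved hour is reallocated, so no slack remains.
example = redistribute_saved_time(
    baseline_hours=40, ai_hours=20, review_share=0.5, reinvest_share=0.5
)
print(example)
```

When the two shares sum to 1, as in this example, the slack is zero: the task is measurably faster, yet the team experiences no reduction in workload.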

The Hidden Cognitive Cost of AI Workflows

While much of the discussion around AI focuses on productivity and output, its impact on how consultants think and process information is less visible, but equally significant. The following dynamics highlight how AI is reshaping cognitive effort and decision-making in consulting workflows.

Speed Is Intensifying Mental Effort and Compressing Critical Thinking

At first glance, AI simplifies consulting work. In practice, it changes how thinking happens. Consultants are no longer working through tasks in a linear way. Instead, they move between prompts, outputs, revisions, and tools. Work becomes iterative, non-linear, and fragmented. Each step requires interpretation to decide what to keep, what to discard, and what to refine.

This constant context switching comes at a cognitive cost. Focus becomes harder to maintain, and mental fatigue builds more quickly, even as tasks are completed faster.

As more analysis is generated in less time, fewer natural pauses remain to challenge assumptions, explore alternatives, and test conclusions, compressing critical thinking into shorter, more reactive cycles. AI-generated outputs can also introduce subtle errors or overconfident conclusions that require careful scrutiny, making sustained judgment more difficult.

The result is a compounding effect: work becomes more mentally demanding while reducing the time available for reflection, creating a persistent tension between execution and judgment.

This erosion of critical thinking is not confined to consulting. Research published by the MIT Media Lab in November 2025 warned that heavy reliance on AI tools can dull independent reasoning, particularly when users defer to the system’s confident framing rather than interrogating it. Forbes highlights that this erosion is not driven by AI alone, but by organizational choices, particularly when speed is prioritized over rigor and leaders fail to reinforce the conditions that sustain critical thinking.

Individual Productivity Is Fragmenting Collective Reasoning

AI is also changing how teams work together. With powerful tools at their fingertips, consultants can complete significant portions of work independently. What once required ongoing discussion and shared problem-solving can now be done in isolation, with AI support filling the gaps that teammates used to fill.

With more work developed individually, there are fewer natural touchpoints for teams to align as thinking evolves. Differences in assumptions, approaches, or interpretations tend to surface later in the workflow, requiring additional effort to reconcile. Over time, this weakens the collective reasoning that has traditionally underpinned high-quality consulting and erodes institutional memory. As fewer consultants are consistently involved across engagements, teams lose the continuity and context that support long-term advisory quality.

The answer is not to return to pre-AI workflows. Harvard Business School found that AI functions as a “cybernetic teammate”, delivering benefits comparable to human collaboration, including stronger ideas and shared expertise. Individuals working with AI spent 16.4% less time on the task than the control group; teams with AI spent 12.7% less. Most strikingly, ideas ranked in the top 10% of quality were three times more likely to come from AI-augmented teams than from individuals working without AI.

While this research was conducted in a consumer goods context, its implications extend to other sectors. The task is not to choose between collaborating with AI and collaborating with humans, but to design workflows where both reinforce each other, combining the speed and breadth of AI with the judgment, context, and accountability of experienced professionals.

How to Capture Productivity Without Burning Out Teams

Addressing these challenges requires more than incremental adjustments. It calls for deliberate changes to how performance is defined, how work is structured, and how teams operate in an AI-enabled environment.

Redefining Performance and Delivery Models

One of the core challenges is that productivity gains are being treated as an endpoint, rather than a trigger for change. If faster delivery simply leads to higher expectations, the system becomes self-reinforcing: teams work faster, expectations increase, pressure rises, and nothing is ever absorbed as slack.

Breaking this cycle requires redefining what good performance looks like in an AI-enabled environment. Speed cannot be the only metric. Time must be deliberately protected for thinking, synthesis, and decision-making, the parts of consulting that AI does not replace.

Capturing value from AI also requires redesigning how work is structured. Teams need clear practices around how AI is used, when outputs must be validated, and where human judgment is non-negotiable. Without this, workflows become inconsistent and quality becomes harder to control. Equally important is redefining how collaboration happens. If more work is done individually, teams must be more intentional about when they come together to align, challenge, and refine ideas.

The most useful framing we have encountered for this shift distinguishes three operating modes:

- AI alone: the system produces an answer and the user accepts it. This mode is fast and often good enough for low-stakes tasks, but it lacks transparency and is prone to errors.

- Human in the loop: the user reviews AI output and sanity-checks it. This mode is dominant today, and helps ensure outputs are used thoughtfully, with an understanding of their strengths and limitations.

- Human in control: the analyst does not just review what AI produces but directs it. AI is steered by human expertise throughout the process, with the analyst owning validation, enrichment, and the final quality bar.

In consulting, where a single flawed insight can reach a client and create costs that far exceed the value of the project itself, both financially and reputationally, “human in control” is the only sustainable operating mode.

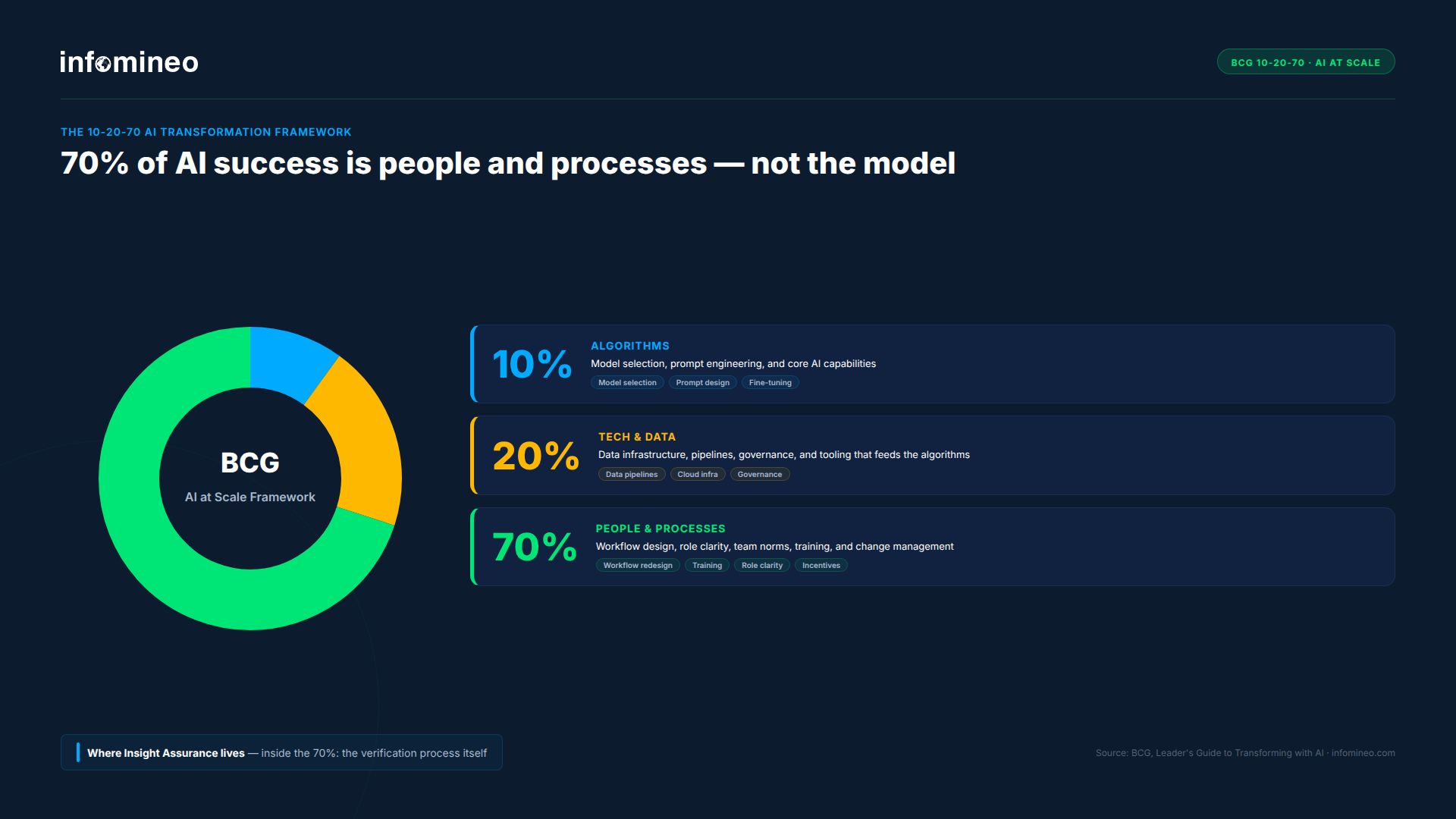

BCG’s framework for deploying AI at scale reinforces where the real effort lies. The firm’s recommended 10-20-70 allocation puts 10% of effort on algorithms, 20% on technology and data, and 70% on people and processes. The implication for consulting leaders is clear: the AI model is not the constraint. Workflow design, role clarity, team norms, and training are.

Rethinking the Traditional Consulting Pyramid

Workflow redesign must also account for how consultants develop over time. The traditional consulting model has historically relied on a broad base of junior consultants developing skills through hands-on analytical work, creating a pipeline of talent that progresses into more senior roles.

As AI automates more of this foundational work, the pyramid structure that underpins consulting begins to erode. In its place, leading advisory firms are describing a more vertically structured model, often referred to as an “obelisk.” In this structure, agent fleets provide productivity, internal knowledge, and external intelligence layers that support delivery, while AI orchestrators handle analytical production through the development and refinement of data pipelines. Engagement architects interpret AI outputs and orchestrate delivery toward scalable, high-quality outcomes, with client relationships remaining anchored at the top. While the shape changes, the accountability for insight quality does not.

This shift has direct implications for how consultants develop. Without deliberate intervention, efficiency gains can reduce exposure to the very tasks that build judgment and expertise, weakening the pipeline over time and concentrating responsibility at higher levels of the organization.

Real firm-level actions are already visible. In September 2025, a major professional services firm in the UK reduced its graduate intake by 200 positions as part of a “wait and see” posture on AI’s impact on hiring needs. Another top-tier firm began in early 2026 linking promotions for senior managers and directors to regular use of internal AI tools, signaling that non-adoption now carries career consequences. These measures are leading indicators of a restructuring that is already underway.

Organizations therefore need to redesign workflows in a way that preserves learning, ensuring that junior consultants remain actively involved in problem solving, while using AI to accelerate, rather than replace, their development. Incentives need to evolve in parallel. If consultants are rewarded primarily for speed and volume, the system will continue to favor output over insight. Aligning incentives with quality, clarity, and measurable client impact is essential.

This shift also requires a more structured approach to capability building. As AI changes how work is performed, consultants at different seniority levels need to develop distinct skills, from effectively prompting and interpreting outputs at junior levels, to validating insights, challenging assumptions, and orchestrating human-AI collaboration at more senior levels. Targeted training curricula for each level of seniority, rather than generic AI literacy courses, are what turn AI from a productivity risk into a productivity lever.

How Infomineo Helps Ensure Insight Quality in an AI-Driven Environment

Infomineo’s Insight Assurance service is designed for this new operating model: an independent layer of market intelligence research validation that sits between AI output and client delivery, preserving AI’s speed while rebuilding the verification discipline that traditional workflows have compressed.

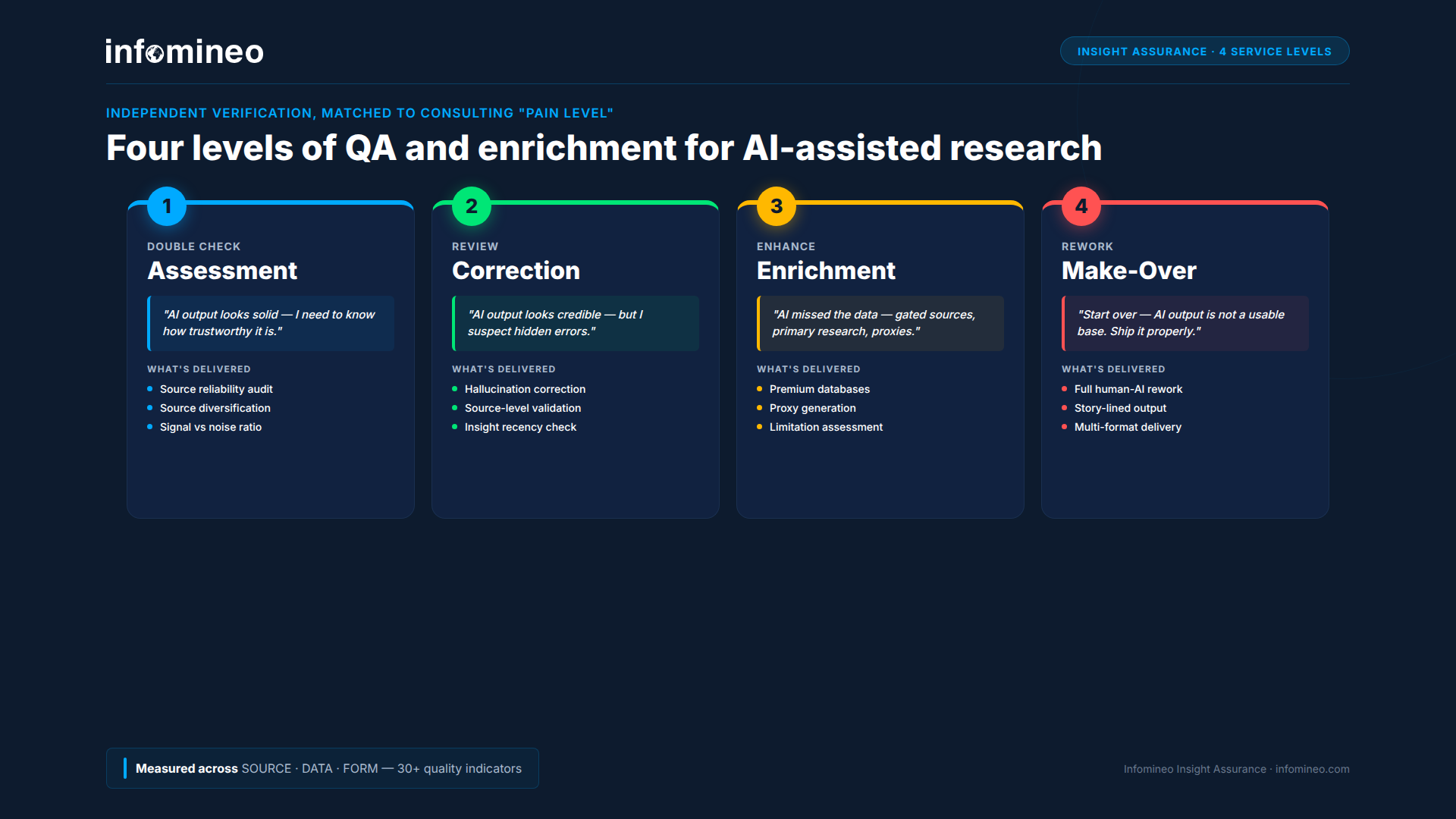

Insight Assurance acts as a final line of defense against the risks of AI-assisted research. It addresses the pressures described earlier in this article (the review burden, the cognitive overload, and the erosion of quality under speed) through four escalating levels of service:

- Assessment: when AI output appears well-structured and credible, but requires an additional layer of validation to confirm its factual reliability. Source credibility, diversification, and signal quality are assessed using a structured evaluation framework.

  Key question: How reliable and trustworthy is this output?

- Correction: when AI output appears credible but raises concerns about potential inaccuracies or inconsistencies. Hallucinations are identified at both the source and insight level, recency is verified, and flagged elements are corrected to ensure integrity.

  Key question: Are there errors or inconsistencies that need to be identified and corrected?

- Enrichment: when AI output is incomplete due to limited access to gated sources or specialized data. Additional inputs from premium databases, primary research, proxy generation, and structured assessment of information gaps are used to complement and strengthen the analysis.

  Key question: What critical data or sources are missing from this analysis?

- Make-Over: when AI output is not suitable as a foundation and requires a full rework within tight timelines. The research is rebuilt into a structured, story-lined deliverable across relevant formats.

  Key question: Does this require a complete rebuild to meet the required standard?
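For teams that want to reason about which level applies, the four key questions above can be read as a simple triage. The sketch below is an illustration under stated assumptions, not Infomineo's actual routing logic: the names `ServiceLevel` and `triage`, the three boolean inputs, and the rule that the most severe condition wins are all hypothetical.

```python
from enum import Enum


class ServiceLevel(Enum):
    """The four levels named in the text, in escalating order."""
    ASSESSMENT = 1
    CORRECTION = 2
    ENRICHMENT = 3
    MAKE_OVER = 4


def triage(usable_as_foundation, missing_gated_data, suspected_errors):
    """Map a reviewer's answers to a service level.

    Assumption (not from the source): when several conditions hold,
    the most severe one determines the level.
    """
    if not usable_as_foundation:
        return ServiceLevel.MAKE_OVER    # full rebuild required
    if missing_gated_data:
        return ServiceLevel.ENRICHMENT   # gaps need premium or primary sources
    if suspected_errors:
        return ServiceLevel.CORRECTION   # hallucinations or inconsistencies
    return ServiceLevel.ASSESSMENT       # looks credible; confirm reliability


# An output that looks sound but has suspected inaccuracies:
print(triage(usable_as_foundation=True, missing_gated_data=False, suspected_errors=True))
```

In practice the judgment behind each boolean is the hard part; the sketch only shows how the levels relate to one another once those judgments are made.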

Every piece of work is measured along three core dimensions: Source, Data, and Form. These are assessed through more than 30 indicators covering source variance and reputation, cross-validation, interpretation accuracy, perspective balance, redundancy, readability, and more. The output is not a subjective review. It is a quantified measurement of the quality of an AI-generated insight, delivered with a transparent audit trail covering the original input, the reasoning, and every correction or enrichment applied.
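To illustrate what a quantified measurement with an audit trail might look like, the sketch below aggregates indicator scores into the three dimensions named above. Everything beyond the dimension names is an assumption made for illustration: the `Indicator` class, the 0 to 100 scale, the equal weighting, and the sample indicators are hypothetical and do not describe Infomineo's actual scoring method.

```python
from dataclasses import dataclass
from statistics import mean

DIMENSIONS = ("Source", "Data", "Form")  # the three dimensions named in the text


@dataclass
class Indicator:
    dimension: str   # one of DIMENSIONS
    name: str        # hypothetical indicator label
    score: float     # hypothetical 0-100 scale
    note: str = ""   # reviewer's reasoning, kept for the audit trail


def score_insight(indicators):
    """Return per-dimension scores, an overall score, and an audit trail.

    Assumption: indicators are equally weighted within a dimension, and
    dimensions are equally weighted in the overall score.
    """
    by_dim = {d: [i.score for i in indicators if i.dimension == d] for d in DIMENSIONS}
    missing = [d for d, scores in by_dim.items() if not scores]
    if missing:
        raise ValueError(f"no indicators supplied for: {missing}")

    dim_scores = {d: mean(scores) for d, scores in by_dim.items()}
    overall = mean(list(dim_scores.values()))
    audit = [f"{i.dimension}/{i.name}: {i.score} ({i.note})" for i in indicators]
    return dim_scores, overall, audit


sample = [
    Indicator("Source", "reputation", 80.0, "mostly primary sources"),
    Indicator("Source", "variance", 60.0, "two sources dominate"),
    Indicator("Data", "cross-validation", 90.0, "figures reconciled"),
    Indicator("Form", "readability", 50.0, "storyline unclear"),
]
dim_scores, overall, audit = score_insight(sample)
print(dim_scores, overall)
```

Keeping the reviewer's note next to each score is what makes the result auditable: a reader can trace the overall number back to individual judgments rather than taking it on trust.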

The operational value for a consulting team is direct. Today, validation is performed across the team, but accountability for insight quality ultimately sits with experienced consultants. Insight Assurance redistributes that burden by introducing a dedicated layer designed specifically for validation and verification, allowing senior consultants to focus on interpretation and decision-making rather than repeated review. At the same time, junior consultants retain exposure to higher-value activities instead of being absorbed into validation work. Clients receive deliverables with the accuracy expected of traditional consulting, at the speed enabled by AI-assisted delivery. Speed is preserved. Risk is reduced. Judgment stays where it belongs.

Frequently Asked Questions

Why is AI productivity creating more pressure, not less, in consulting teams?

AI compresses execution time but does not reduce the need for validation, interpretation, and integration into a client-ready narrative. As the volume of outputs increases, more work is generated that needs to be reviewed, refined, and contextualized. At the same time, clients raise their expectations to match the new speed of delivery. The result is that time saved is quickly reallocated into additional work and higher standards, creating more pressure rather than less.

What does “human in control” mean in practice for AI-assisted consulting work?

“Human in control” means the consultant directs AI throughout the workflow rather than reviewing its output at the end. The analyst owns the prompt, the source scope, the reasoning path, and the validation criteria. AI accelerates data gathering and drafting, but the analyst decides what is in, what is out, and what still needs to be rebuilt. It goes beyond “human in the loop,” where the user reviews and sanity-checks outputs, shifting instead to a model where the analyst owns validation, enrichment, and the final quality bar.

How does AI affect the traditional consulting pyramid?

AI is reshaping the consulting pyramid into an “obelisk.” Agent fleets provide productivity, internal knowledge, and external intelligence layers, AI orchestrators handle analytical production, and engagement architects focus on interpreting outputs and directing delivery, with client relationships anchored at the top. Without deliberate redesign, this shift risks weakening apprenticeship pathways and concentrating responsibility in roles that rely on judgment and do not scale easily.

How does an independent verification layer reduce review burden on senior consultants?

An independent verification layer takes on source checking, hallucination correction, gated enrichment, and, where needed, full rework of AI outputs. Instead of this validation work scaling with the volume of AI-generated content, it is handled by a dedicated layer using structured evaluation criteria and delivered with a quantified quality assessment and a transparent audit trail. The consulting team retains final accountability for the deliverable, while focusing its time on judgment, interpretation, and client interaction rather than on verifying inputs.

How should consulting firms measure AI productivity beyond speed?

Consulting firms should measure AI productivity not just by speed, but by the quality and impact of outputs. This includes the reliability of insights, the strength and diversity of sources, the accuracy of interpretation, and the clarity of the final deliverable. Productivity should also account for how much rework, validation, and correction is required. True gains come not from producing outputs faster, but from delivering work that is credible, decision-ready, and requires minimal downstream revision.